A unified psychological space for human perception of physical and social events

Image credit: Cog Psy

Abstract

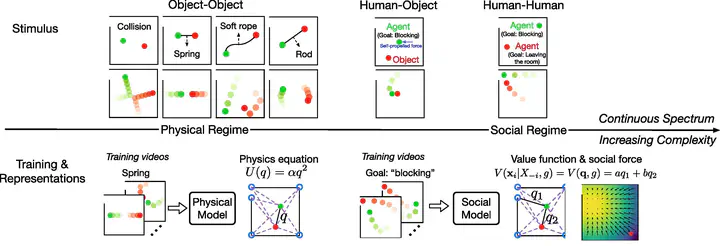

One of the great feats of human perception is the generation of quick impressions of both physical and social events based on sparse displays of motion trajectories. Here we aim to provide a unified theory that captures the interconnections between perception of physical and social events. A simulation-based approach is used to generate a variety of animations depicting rich behavioral patterns. Human experiments used these animations to reveal that perception of dynamic stimuli undergoes a gradual transition from physical to social events. A learning-based computational framework is proposed to account for human judgments. The model learns to identify latent forces by inferring a family of potential functions capturing physical laws, and value functions describing the goals of agents. The model projects new animations into a sociophysical space with two psychological dimensions: an intuitive sense of whether physical laws are violated, and an impression of whether an agent possesses intentions to perform goal-directed actions. This derived sociophysical space predicts a meaningful partition between physical and social events, as well as a gradual transition from physical to social perception. The space also predicts human judgments of whether individual objects are lifeless objects in motion, or human agents performing goal-directed actions. These results demonstrate that a theoretical unification based on physical potential functions and goal-related values can account for the human ability to form an immediate impression of physical and social events. This ability provides an important pathway from perception to higher cognition.
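To make the "violation of physical laws" dimension concrete, the following is a minimal sketch under stated assumptions, not the model described above. It relies only on the standard relation that a force is the negative gradient of a potential function. The spring-like potential, the function names (`spring_potential`, `force_from_potential`, `violation_score`), and the mean-squared-residual score are all hypothetical choices made for illustration. The abstract does not specify the form of the learned potentials or how the projection is computed, and the intention dimension is not sketched here.

```python
import math

def spring_potential(distance, stiffness=1.0, rest_length=1.0):
    """Hypothetical pairwise potential U(d) = 0.5 * k * (d - d0)^2 (an assumption)."""
    return 0.5 * stiffness * (distance - rest_length) ** 2

def force_from_potential(pos, other, potential, eps=1e-5):
    """Force on `pos`: the negative gradient of the potential, by central differences."""
    def energy(p):
        return potential(math.dist(p, other))
    x, y = pos
    fx = -(energy((x + eps, y)) - energy((x - eps, y))) / (2 * eps)
    fy = -(energy((x, y + eps)) - energy((x, y - eps))) / (2 * eps)
    return (fx, fy)

def violation_score(trajectory, other_trajectory, potential, dt=1.0, mass=1.0):
    """Toy score: mean squared gap between the observed acceleration and the
    acceleration predicted by the potential. Larger values mean the motion is
    harder to explain with this (assumed) physical law."""
    total, count = 0.0, 0
    for t in range(1, len(trajectory) - 1):
        # Observed acceleration from second-order finite differences of position.
        ax = (trajectory[t + 1][0] - 2 * trajectory[t][0] + trajectory[t - 1][0]) / dt ** 2
        ay = (trajectory[t + 1][1] - 2 * trajectory[t][1] + trajectory[t - 1][1]) / dt ** 2
        fx, fy = force_from_potential(trajectory[t], other_trajectory[t], potential)
        total += (ax - fx / mass) ** 2 + (ay - fy / mass) ** 2
        count += 1
    return total / count if count else 0.0

# Usage: an object that stays motionless while the spring is stretched
# cannot be explained by the spring potential alone.
anchor = [(0.0, 0.0)] * 3
resting = [(1.0, 0.0)] * 3    # at rest length: no force, no motion expected
stretched = [(2.0, 0.0)] * 3  # stretched spring, yet the object does not move
score_resting = violation_score(resting, anchor, spring_potential)
score_stretched = violation_score(stretched, anchor, spring_potential)
```

In this toy setup, the object sitting at the spring's rest length scores near zero, while the object that stays still despite a stretched spring scores higher. That ordering is only an illustration of how a residual against a potential-derived force could serve as one axis of such a space.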